1. Is Your Company Operating from an Industrial-Era Playbook?

2. Why Performance-Based Compensation Doesn't Work

3. Traditional Project Management Needs an Upgrade (This article)

Don’t worry, we’ve all done it. In fact, most of us are still doing it. Actually, most of us are doing it and still think it’s okay to do it.

No, I’m not talking about sneaking in a little TMZ while we’re at work. I’m talking about using Microsoft Project or Excel to make a project plan—something far worse for productivity than the worker time lost by following the latest celebrity break-ups.

Okay, I admit it: I use Microsoft Project Gantt charts for planning small internal projects. And this isn’t really a problem because the time horizon for these projects is short, the complexity manageable, the impact of delays relatively minor, and the amount of uncertainty fairly limited. In short, it’s a simple tool for a simple problem.

But what happens when the project gets more complicated? When the environment in which the product operates is constantly changing? When deliverables are complex and require significant collaboration across teams and partners? When money is on the line and people’s careers hang in the balance? That’s when the Gantt chart starts to break down.

Under these stressful circumstances (which are the norm for many digital initiatives), it’s natural for our desire to exert control to increase, and with it, our desire to seek familiar artifacts that give us a feeling of control. When the going gets tough, the tough make project plans. Unfortunately, feeling in control and being in control aren’t the same thing. No doubt the ancient tribes who performed elaborate rituals to control the weather felt in control, even though they weren’t.

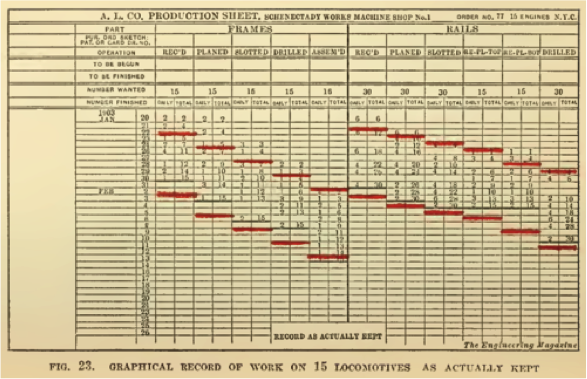

But before we discuss the pitfalls of traditional project management and its primary artifact, the Gantt chart, let’s review its history. The Gantt chart was developed in the 1910s by Henry Gantt, an early pioneer in the field of scientific management. Although today we think of the Gantt chart as a tool to manage projects, it was originally designed as a general management tool to improve batch production in steel factories. The document gave factory supervisors visibility into how much work workers actually completed compared to the amount of work they were scheduled to complete. When used with “Production Cards,” which prescribed the amount of work each worker was expected to complete each day, the Gantt chart let supervisors know whether or not production was on schedule.

Old School Gantt Chart


During the early twentieth century, this approach worked well. It excelled at measuring the progress of simple, discrete, independent tasks. After producing hundreds of batches of steel, managers had a pretty good idea how long each task took. If someone was behind, they could accurately estimate how long it would take to catch up. Since factory workers were uneducated and had limited skills (in some cases they were children), they needed to be told precisely what to do and when to do it. Furthermore, the factory line was sequential and each step in the process was discrete, so coordination happened between management and workers, not between workers themselves.

In fact, this method, which laid the foundation for traditional project management, was so successful, it was used throughout the twentieth century, even as projects got more complicated. The Gantt chart was even used to construct the Hoover Dam (which came in ahead of schedule and under budget!).

Project Management Gets Techie

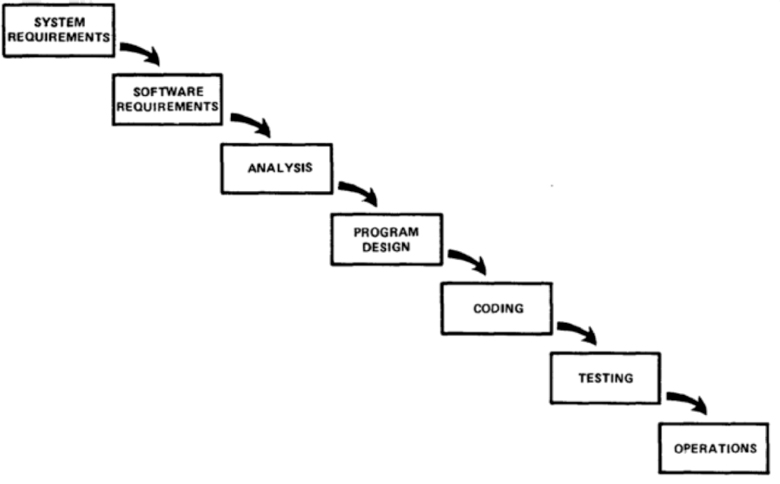

Later, when computers entered the scene in the second half of the twentieth century, project management turned its attention to the administration of software projects. In 1970, a Lockheed engineer named Winston Royce wrote a paper entitled “Managing the Development of Large Software Systems,” in which he outlined a sequential approach to software development. His paper included a nice little diagram that illustrated how requirements, design, coding, testing and maintenance should follow each other in a neat, orderly progression. The diagram resembled a waterfall, and so the methodology was later named (by others) the Waterfall method.

Winston Royce’s Waterfall Diagram


The Gantt chart and the Waterfall method fit together like peas and carrots. The sequential workflow and discrete stages of Waterfall mirrored perfectly the ordered assembly line of Henry Gantt’s factory. In fact, the analogy between manufacturing and software development was so powerful that later software development methodologies, like the Capability Maturity Model, also based their ideas on the factory workflow.

Indeed, things were looking bright in the era of bell bottoms and lava lamps. Gantt charts were taking care of business, computers started automating rote tasks and software development had a new methodology. All would have been perfect if not for one simple fact: the Waterfall method itself was inherently flawed. Apparently nobody noticed that Winston Royce warned _in his own paper_ that a sequential methodology like the one drawn in his diagram was a bad idea: “I believe in this concept, but the implementation described above is risky and invites failure.” Oops. People went straight to the picture, failing to read the paper itself, which advocated for an iterative (not sequential) approach.

Making matters worse, as we transitioned from disco to grunge, software got a lot more complicated. Technology started evolving more quickly, computers became ubiquitous household appliances, and soon everything was connected together with a crazy global network originally designed to withstand a nuclear war. Then things really started to get out of hand. In the early 2000s, the cost of cancelled and failed software projects at companies like Nike, Kmart, CIGNA, HP and McDonald’s skyrocketed, reaching hundreds of millions of dollars. Yet despite the flood of epic software failures during this period, the industry never really questioned the Waterfall methodology itself. Instead, they argued that the failures were due to inadequate requirements and lack of management attention. Which is to say, they doubled down on Waterfall: What was needed was _more_ upfront documentation, and more micro-management from above. Hey, can we get those “Production Card” thingies online?

Pointing Out That the Emperor Has No Clothes

It was in this environment that a group of intrepid software engineers finally said enough is enough and put together a simple manifesto to stop the insanity. The Agile Manifesto, drafted in 2001, established a few simple principles that acknowledged that change is inevitable, not a planning flaw, and that more human collaboration was needed to make software better, not excessive levels of documentation. Agile was a 180-degree shift from traditional project management, and the teams who started using it overwhelmingly embraced it.

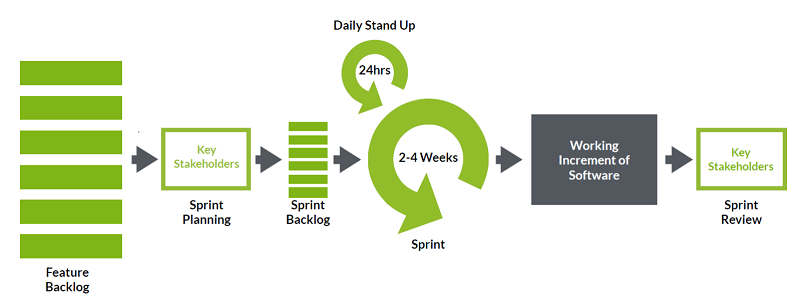

One of the reasons Agile became so successful is that it assumes change instead of denying its existence. Rather than mapping out all of the tasks upfront for the entire project in a Gantt chart (as is the practice in Waterfall), Agile assumes things are going to change, and instead recommends simply starting off with a high-level list of desired features (called Epics in Agile parlance). Only those features that will be tackled during the next two- to four-week “Sprint” should get fleshed out in detail. Then, after the Sprint is over, the team gauges its progress by reviewing the tangible work-product, absorbing its learnings, and making the appropriate course corrections for the next Sprint.

The Scrum Process, a specific Agile methodology


This simple blueprint for managing projects proved to be extremely powerful because it handled change gracefully. By breaking down big projects into small chunks, prioritizing work based on the business value it provided, and allowing adaptation based on empirical results, Agile doesn’t melt down when things change. Traditional project management, in contrast, maps out every task on a project upfront in painstaking detail, making project plans brittle to even small deviations. When changes inevitably occur, elaborate change management processes must be enacted to handle them. Change management committees, we were told, must be established to review the impact of all potential changes. Complex matrices of features must be maintained so that every change can be mapped back to affected features. And most importantly, if a change happened in your project, you were to acknowledge that you were a very bad person for introducing a “requirements bug,” and to atone for your sin, you were to increase the amount of documentation in your next project to make your requirements more “thorough.”

It was (and still is) ridiculous. I once had a client tell me (rightfully so) that the worst part of her year was reading the spec. Sad.

At some level, though, the failure of traditional project management to manage complexity and uncertainty shouldn’t come as a surprise. Do we really think that a business practice developed a century ago to manage batch production in factories is the right tool to manage software projects in the Internet era? Or that a 1970s methodology created by accident from the misinterpretation of a paper should be the guide for today’s technology projects? Probably not.

Let’s face reality: Things are different now. Back then, the problems were relatively simple (e.g. producing steel); now they are highly complex (e.g. developing sophisticated software on a mobile device that is always connected to a virtual datacenter via a distributed global network). Back then, management needed to micro-manage the actions of every worker; now workers must collaborate to accomplish tasks. Back then, uncertainty was relatively low (e.g. the next batch of steel will be pretty similar to the last thousand batches); today there is a huge amount of uncertainty (e.g. Will my 1,000-store iBeacon deployment scale properly and integrate seamlessly with my new cloud-based inventory system? And will it be secure?). Indeed, times have changed, and so too must our approach to software project management.

Breaking Old Habits

But what about changing our approach to general management? As managers, don’t we face the same twenty-first century challenges that software engineers do? Don’t we also deal with high levels of uncertainty, constant change, increasing levels of complexity, and highly interconnected, multidisciplinary tasks? For the vast majority of us, the answer to these questions is yes. So it makes logical sense for us to leverage Agile for general management initiatives as well. Yet most managers still gravitate, habitually, toward familiar horse-and-buggy-era tactics:

- Directing initiatives from above, rather than formulating plans based on input from front-line workers.

- Instituting lengthy fact-finding and strategy committees to draft detailed requirements in advance, rather than introducing initiatives in smaller chunks, deriving business value earlier and course correcting along the way based on empirical results.

- Producing comprehensive (and fragile) project schedules up-front, detailing tasks and timelines, rather than setting a general direction and adapting as things change.

In the same way Waterfall project managers cling to a system invented when change was minimal, uncertainty was rare and employees were unskilled, general managers today are hooked on an outdated approach that helps them feel in control, even when they aren’t.

So, unless you are actually a steel plant supervisor: Stop running your business like a 1900s factory!

Okay, I realize this is easier said than done. We all have managers, boards and investors to report to, and it’s unlikely they’re interested in hearing about some philosophical Agile Manifesto mumbo-jumbo. They want to know what they’re going to get, when they’re going to get it, and what it will cost. They need to quantify investments and stay apprised of progress, and that’s not going to change. So what the hell are we supposed to do without a project plan?

First, keep calm and welcome to the twenty-first century.

Second, take advantage of the new tools we didn’t have in the twentieth century. By taking an Agile approach, and breaking down big initiatives into bite-sized chunks, we gain the ability to measure progress based on actual, empirical results, rather than the theoretical progress implied by a Gantt chart. Empiricism is a powerful tool because it is a far more accurate way to measure progress.

Seeing is Believing

The best evidence of progress we have in Agile is the work-product itself. In contrast to Waterfall, which typically produces lots and lots of documents upfront intended to represent the final work product, Agile starts producing work product from the very first Sprint. That means in Agile, you know exactly where you stand because you can actually see your progress. For example, if a manager wants to start an initiative to increase the efficiency of her department, instead of conducting extensive interviews, formulating a grand strategy and drafting an elaborate plan upfront, an Agile manager might convene one or two meetings with her team to surface the obvious pain points, work with the team to devise a few initial solutions, and get to work immediately to start implementing them.

This approach has several advantages. First, it gets the improvements “in market” faster, allowing you to reap their benefits sooner. Second, it creates a sense of accomplishment, which is a powerful team motivator. Third, it allows you to learn and adapt for the next Sprint. Finally, it gives you a sense of your pace: If you know it took you X weeks to accomplish Y, then you have a much better sense of how long it’s going to take to complete the remaining tasks, A, B and C.

In Agile, this pace is captured in a formal metric called Velocity. Agile teams estimate granular features (called User Stories) using an arbitrary unit of measure called Story Points. The team establishes the value of a Story Point by selecting a few similar User Stories and assigning them an arbitrary number of Story Points, thereby establishing a baseline. Then, other User Stories are assigned Story Points based on their size relative to the baseline. Once this is done, Agile teams estimate the number of Story Points they believe they can complete in a single Sprint, thereby establishing an estimated Velocity.
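To make the baseline idea concrete, here is a minimal Python sketch. The story names, the baseline value of 3 Story Points, and the relative sizes are all invented for illustration; real teams usually arrive at these numbers through discussion, not a formula.

```python
# Hypothetical backlog: story names and sizes are invented for illustration.
BASELINE_POINTS = 3  # arbitrary value the team gives its reference stories

# Size of each User Story relative to the baseline (1 = about the same size).
relative_size = {
    "Reset password": 1,      # baseline story
    "Edit profile": 1,        # judged similar to the baseline
    "Search products": 2,     # roughly twice the baseline
    "Checkout with card": 4,  # roughly four times the baseline
}

# Story Points follow from relative size, not from hours or days.
story_points = {name: size * BASELINE_POINTS for name, size in relative_size.items()}

# Stories the team believes it can finish in its first Sprint.
first_sprint = ["Reset password", "Edit profile", "Search products"]
estimated_velocity = sum(story_points[name] for name in first_sprint)

print(story_points)
print("Estimated Velocity:", estimated_velocity)  # 3 + 3 + 6 = 12 Story Points
```

The absolute numbers are meaningless on their own. What matters is that the stories are sized consistently against the same baseline.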

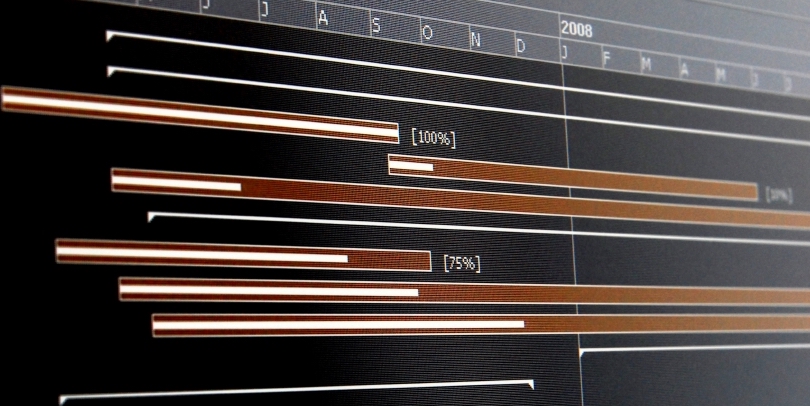

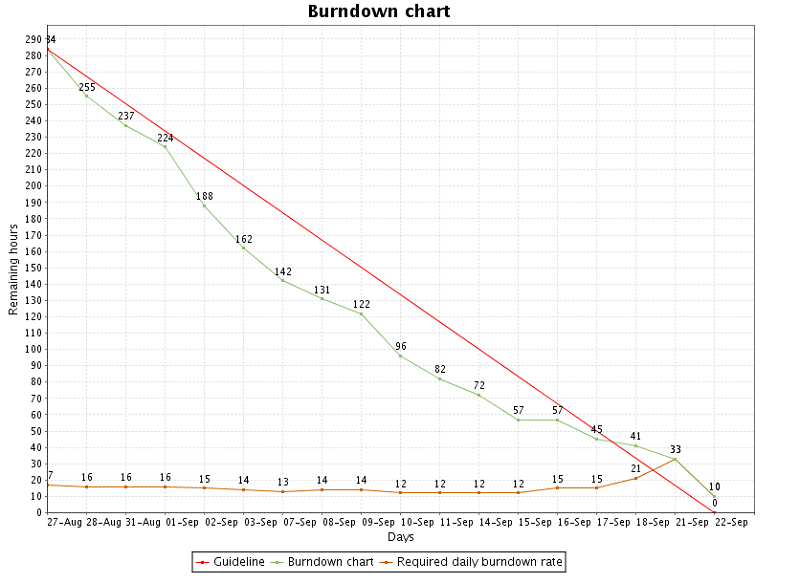

A Burndown Chart is a great way for managers to gauge progress. Jira produces charts like this one out of the box.


Why use Story Points instead of hours or man-days? Because people are terrible at estimating time. They just are, and everybody knows it. (For a great book on how bad people are at estimating and why, read The Black Swan by Nassim Nicholas Taleb.) However, as it turns out, people are pretty good at estimating relative size. Agile exploits this basic human characteristic by developing estimates based on what people are naturally good at.

Story Points also give us a powerful way to gauge progress throughout a project. By measuring the number of Story Points a team is able to complete by the end of each Sprint (the team’s actual Velocity), we can compare it to the number of Story Points estimated at the beginning (the team’s estimated Velocity), and measure the accuracy of the team’s estimates. More importantly though, we can measure the pace at which the team is actually able to complete work. Once we know the team’s Velocity we can get a general sense of total project duration by using the following equation:

Total Story Points for all User Stories / Velocity = The Total Number of Sprints the project is expected to take.
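As a rough illustration of this equation, the Python sketch below averages the actual Velocity over a few completed Sprints and divides the total Story Points by it. The numbers and function names are hypothetical, and averaging past Sprints is just one simple way to pick a Velocity; rounding up assumes a partly used Sprint still counts as a full Sprint.

```python
import math
from typing import Sequence


def average_velocity(points_completed_per_sprint: Sequence[int]) -> float:
    """Actual Velocity: mean Story Points completed per Sprint so far."""
    if not points_completed_per_sprint:
        raise ValueError("need at least one completed Sprint")
    return sum(points_completed_per_sprint) / len(points_completed_per_sprint)


def sprints_needed(total_story_points: int, velocity: float) -> int:
    """Total Story Points / Velocity, rounded up to whole Sprints."""
    if velocity <= 0:
        raise ValueError("velocity must be positive")
    return math.ceil(total_story_points / velocity)


# Hypothetical numbers, for illustration only.
estimated_velocity = 25          # what the team guessed before starting
actual_per_sprint = [18, 22, 20]  # Story Points actually completed
total_story_points = 240         # all User Stories in the project

velocity = average_velocity(actual_per_sprint)    # 20.0
estimate_accuracy = velocity / estimated_velocity  # 0.8, i.e. 80% of the guess

print("Actual Velocity:", velocity)
print("Expected Sprints:", sprints_needed(total_story_points, velocity))  # 12
```

Re-running the calculation after every Sprint keeps the forecast tied to what the team has actually delivered.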

As a 15-year veteran in the digital business, I can tell you from hard-earned experience that gauging progress based on empirical data is a far better approach than using the “paper progress” derived from a Gantt Chart or project schedule. When my neck is on the line (which it basically is every day), I advocate for an Agile approach every time.

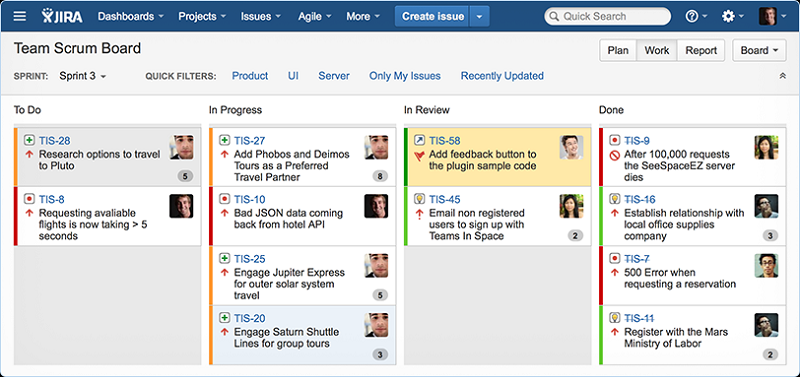

Another great way of watching progress is through the Agile Work Board, which shows all the tasks for the Sprint and what their status is. Using a tool like JIRA Agile makes it easy. I spy on my team’s progress all the time this way.


Maintaining the Illusion of Control: Management’s New Crack

But everything has trade-offs. One of the things you give up when embracing an Agile management approach is the ability to tell your boss or board the exact scope of your initiative and the exact timeline for completion. You get many wonderful things instead: a much more accurate gauge of progress, faster time-to-market, more motivated teams, the ability to adapt to change, and the reduction of waste by focusing on deliverables instead of document by-products. Yet, you still can’t say exactly what the end product will be or how exactly you’ll get there.

But here’s the dirty little secret: You can’t do that with traditional management practices either. Yes, we can create elaborate Gantt charts and project schedules that offer the illusion of specificity and control, but they don’t really work. And let’s face it: in our heart of hearts we all know this. Things are just too complex and uncertain today.

Unfortunately, our desire for control and fear of uncertainty are so profound that they crowd out our desire to solve the problem in the first place. Managing the progress of the deliverable has become more important than solving the problem itself. And what’s crazy is that most executives would rather spend more, wait longer, and absorb greater risk merely to gain the illusion of control. Such is the power of the corporate rain dance.

So it is our responsibility as innovators to shift the conversation away from talking about delivering the thing, and instead start talking about how we are going to address the problem. It means acknowledging that we may not know today what the final solution will be, but that we’ll likely discover it along the way. And most importantly, it means recognizing that we can’t control the uncertainty of the modern universe, but that we can get things done in spite of it.

Once we drop the industrial-era playbook and start operating like twenty-first century managers, we can start getting things done faster and with less pain. And with all the extra time we’ll have on our hands from being so efficient, we can finally get back to surfing TMZ during the day.